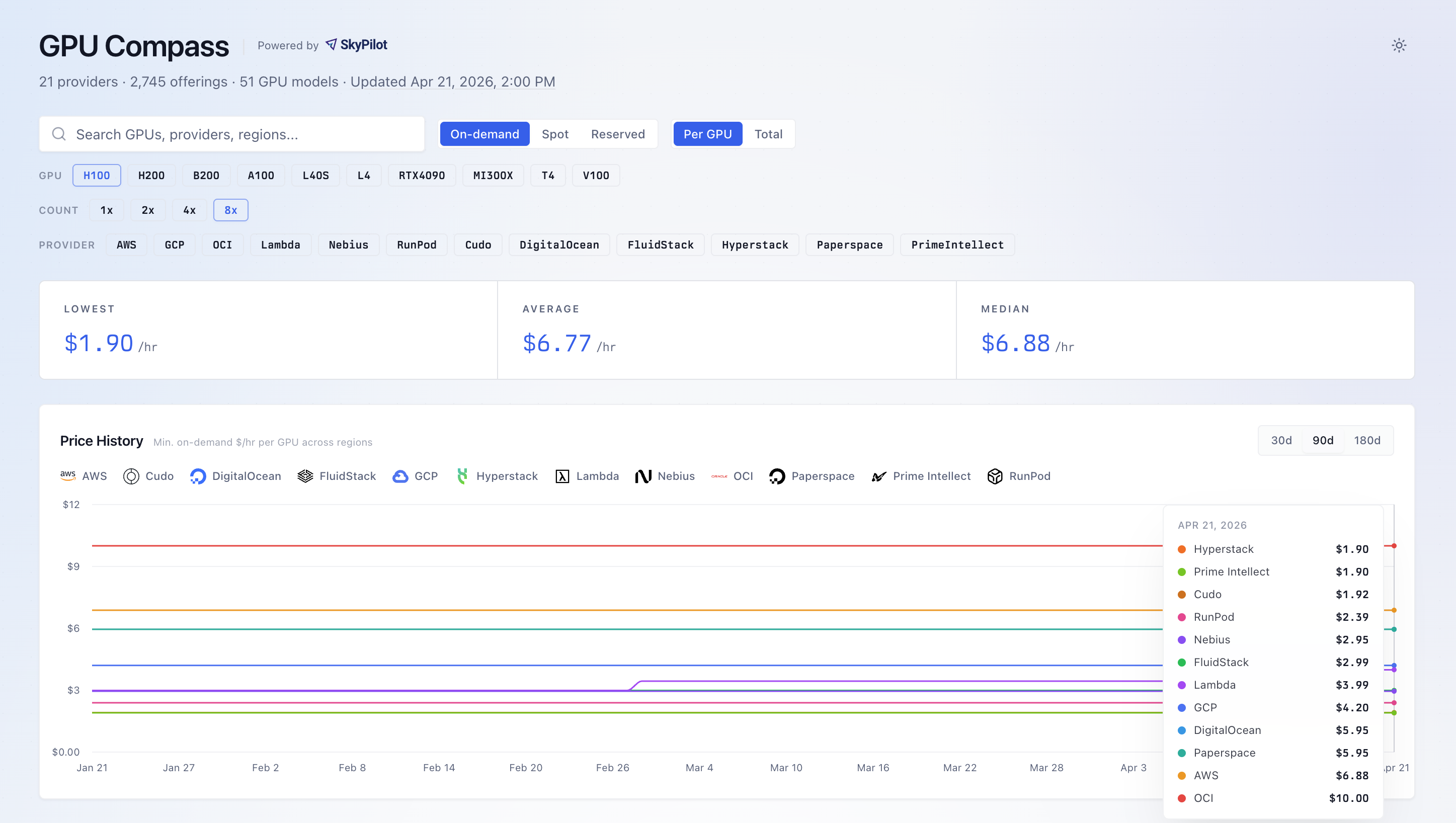

TL;DR: You can’t make good infrastructure decisions if you only see a fraction of the GPU options. GPU Compass tracks the real-time elastic GPU landscape. Try it at gpus.skypilot.co.

You’re building the next big AI company. You need GPUs. The first step is to survey the landscape: which providers exist, what GPU SKUs they offer, in which regions, and at what price.

This turns out to be surprisingly hard.

The GPU Landscape is Bigger Than You Think

Ask most engineers where to get cloud GPUs, and they’ll name three to five providers: AWS, GCP, CoreWeave, Nebius, and so on. That’s the mental map.

The real landscape is an order of magnitude larger: over 20 providers, dozens of GPU SKUs, and more than two thousand distinct offerings once you factor in instance types, regions, and pricing models.

In a market this large, basing infrastructure decisions only on the few providers you already know means you’re likely missing better options.

GPU Compass: A Real-time View of the GPU Landscape

That’s why we built GPU Compass: https://gpus.skypilot.co/.

GPU Compass is a real-time, browsable view of the entire cloud GPU landscape: 20+ providers, 50+ GPU SKUs, and 2,000+ offerings, updated every 7 hours from cloud provider APIs.

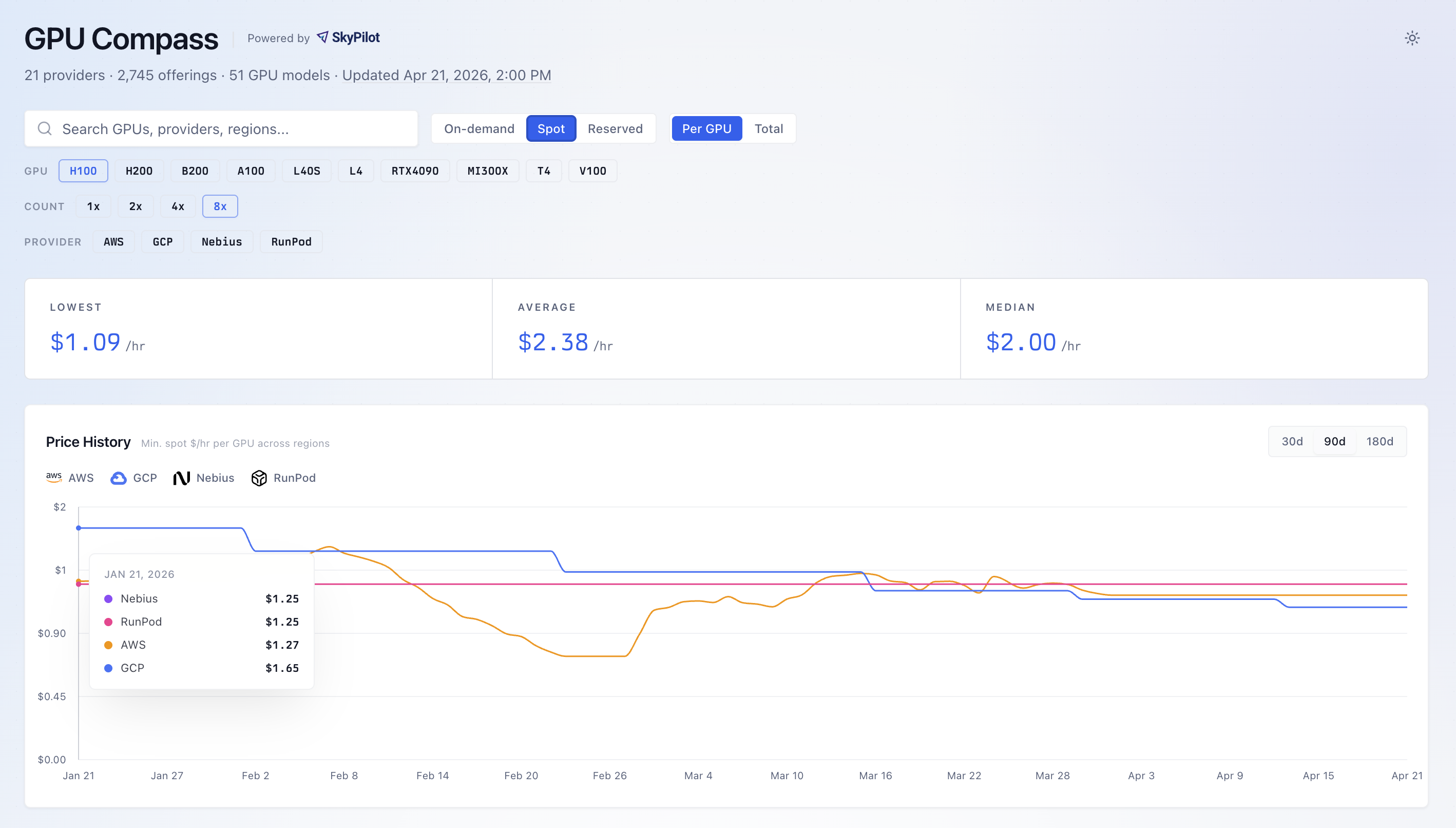

You can browse by GPU SKU, filter by provider or region, and compare on-demand and spot pricing side by side.
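To make "filter, then compare on-demand and spot" concrete, here is a minimal sketch over a tiny CSV snippet. It assumes a flattened table whose column names (`Cloud`, `InstanceType`, `AcceleratorName`, `AcceleratorCount`, `Region`, `Price`, `SpotPrice`) are only loosely modeled on the SkyPilot Catalog's per-cloud CSV files; the providers, instance names, and prices are made-up placeholders, not real quotes, and this is not how GPU Compass itself is implemented.

```python
import csv
import io

# Hypothetical flattened catalog snippet. Column names are an assumption;
# providers, instance names, and prices are made-up placeholders.
SAMPLE_CSV = """Cloud,InstanceType,AcceleratorName,AcceleratorCount,Region,Price,SpotPrice
cloud-a,example-8xh100,H100,8,region-1,80.00,32.00
cloud-a,example-8xh100,H100,8,region-2,88.00,
cloud-b,example-8xh100,H100,8,region-1,96.00,40.00
cloud-b,example-1xa10g,A10G,1,region-1,1.00,0.40
"""


def _price(value):
    """Convert a price cell to float, treating a blank cell as 'not offered'."""
    return float(value) if value else None


def load_offerings(text):
    """Parse catalog CSV text into a list of dicts with numeric fields."""
    rows = []
    for row in csv.DictReader(io.StringIO(text)):
        row["AcceleratorCount"] = int(row["AcceleratorCount"])
        row["Price"] = _price(row["Price"])
        row["SpotPrice"] = _price(row["SpotPrice"])
        rows.append(row)
    return rows


def filter_offerings(rows, gpu, count=None, cloud=None, region=None):
    """Keep rows for one GPU SKU, optionally narrowed by count, cloud, and region."""
    return [
        r for r in rows
        if r["AcceleratorName"] == gpu
        and (count is None or r["AcceleratorCount"] == count)
        and (cloud is None or r["Cloud"] == cloud)
        and (region is None or r["Region"] == region)
    ]


def spot_discount(row):
    """Spot discount as a fraction of on-demand, or None if either price is missing."""
    if row["Price"] is None or row["SpotPrice"] is None:
        return None
    return 1.0 - row["SpotPrice"] / row["Price"]


if __name__ == "__main__":
    for r in filter_offerings(load_offerings(SAMPLE_CSV), "H100", count=8):
        d = spot_discount(r)
        shown = "no spot" if d is None else f"{d:.0%} off"
        print(r["Cloud"], r["Region"], r["Price"], r["SpotPrice"], shown)
```

A blank `SpotPrice` is treated as "no spot offering in that region" rather than as zero, so it never shows up as a 100% discount.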

A note on reserved GPU clusters: reserved pricing is often not published, but you can estimate it as a percentage of on-demand pricing (e.g., ~50%).

In practice, most large-scale SkyPilot deployments use reserved clusters.
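The rule of thumb above is simple arithmetic, sketched below. The 50% default mirrors the example figure in the text, and the 730 hours per month (8,760 hours per year divided by 12) is an assumption for a month of continuous use; real reserved quotes vary by provider, commitment length, and volume, and the $80/hr input is a placeholder.

```python
# Assumption: reserved pricing is estimated as a fixed fraction of on-demand
# (the ~50% rule of thumb). Real reserved quotes vary by provider,
# commitment length, and volume.
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12


def estimate_reserved_hourly(on_demand_hourly, fraction=0.5):
    """Estimate a reserved hourly price as a fraction of the on-demand price."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    return on_demand_hourly * fraction


def estimate_reserved_monthly(on_demand_hourly, fraction=0.5, hours=HOURS_PER_MONTH):
    """Estimated cost of keeping one reserved instance for a month."""
    return estimate_reserved_hourly(on_demand_hourly, fraction) * hours


if __name__ == "__main__":
    # Placeholder on-demand price for an 8-GPU instance, not a real quote.
    on_demand = 80.0
    reserved = estimate_reserved_hourly(on_demand)
    print(f"reserved ~ ${reserved:.2f}/hr, ${reserved / 8:.2f} per GPU-hour")
    print(f"~ ${estimate_reserved_monthly(on_demand):,.0f} per month")
```

Making `fraction` a parameter lets you plug in a quote once you have one, instead of baking the 50% guess into every comparison.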

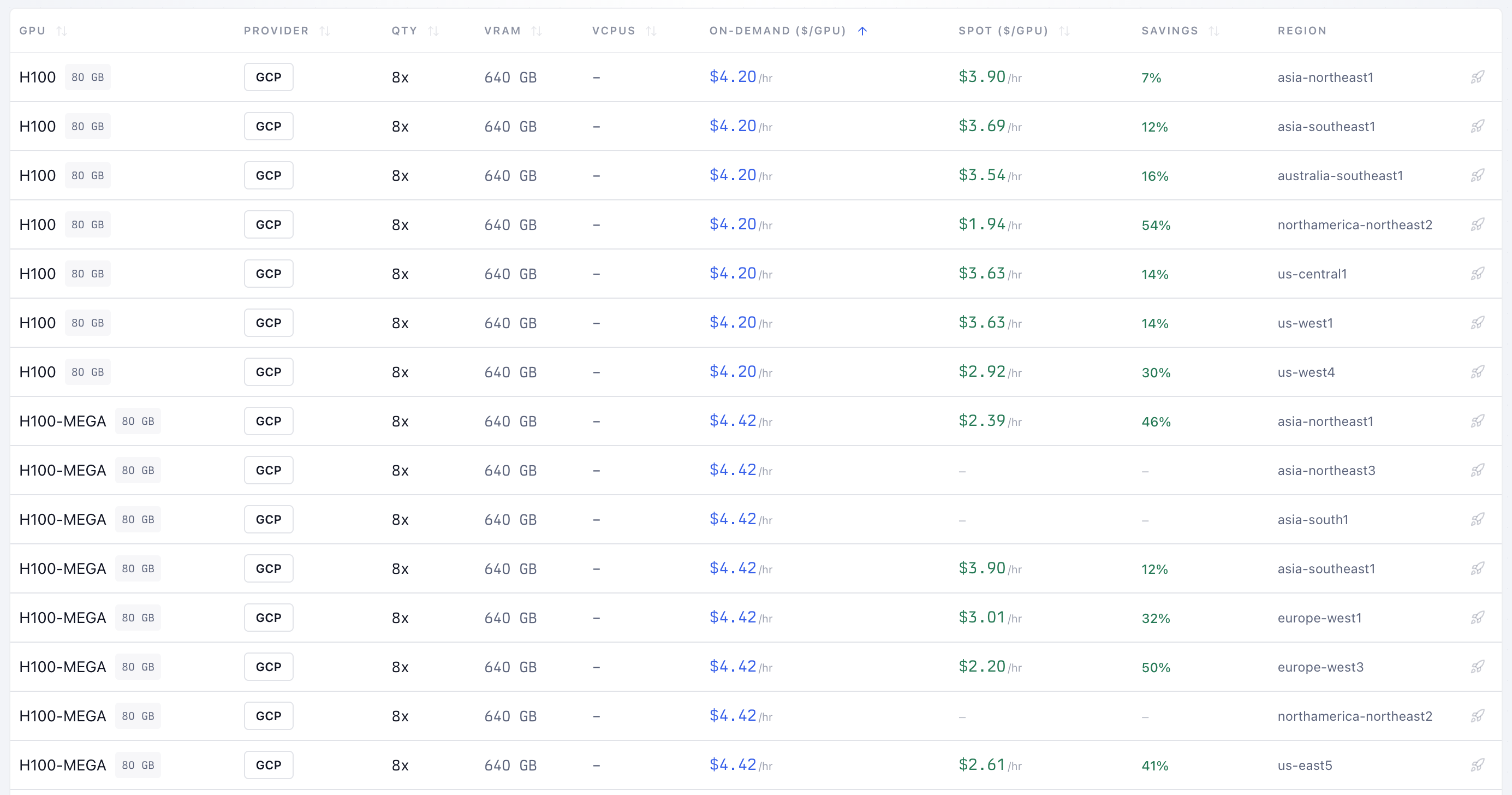

For example, selecting H100:8 and filtering to GCP shows its on-demand pricing compared across regions.
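The region comparison boils down to "filter to one provider and one SKU, then sort by price". A minimal sketch follows; the `Offering` record, provider names, regions, and prices are all hypothetical placeholders, not GPU Compass data.

```python
from typing import NamedTuple


class Offering(NamedTuple):
    cloud: str
    region: str
    gpu: str
    count: int
    on_demand: float  # $/hour for the whole instance (placeholder values below)


# Hypothetical offerings; not real regions or prices.
OFFERINGS = [
    Offering("cloud-a", "region-1", "H100", 8, 80.0),
    Offering("cloud-a", "region-2", "H100", 8, 88.0),
    Offering("cloud-a", "region-3", "H100", 8, 76.0),
    Offering("cloud-b", "region-1", "H100", 8, 96.0),
]


def rank_regions(offerings, cloud, gpu, count):
    """Return (region, price, fraction above cheapest) for one cloud, cheapest first."""
    matches = sorted(
        (o for o in offerings if o.cloud == cloud and o.gpu == gpu and o.count == count),
        key=lambda o: o.on_demand,
    )
    if not matches:
        return []
    cheapest = matches[0].on_demand
    return [(o.region, o.on_demand, o.on_demand / cheapest - 1.0) for o in matches]


if __name__ == "__main__":
    for region, price, premium in rank_regions(OFFERINGS, "cloud-a", "H100", 8):
        print(f"{region}: ${price:.2f}/hr (+{premium:.1%} vs cheapest)")
```

Reporting each region's premium over the cheapest one makes it easier to judge whether a closer or better-connected region is worth the extra cost.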

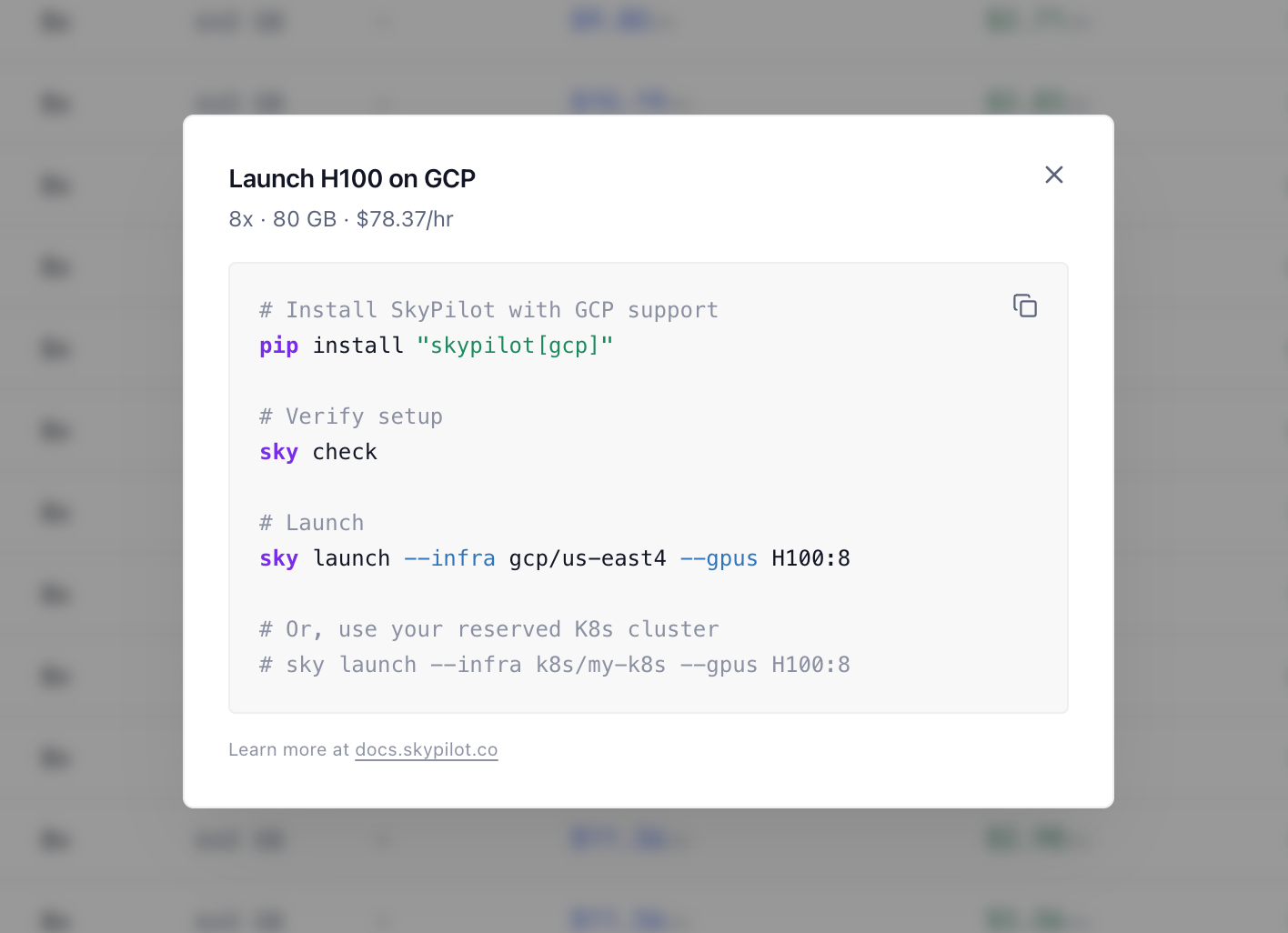

Click a row to get the command that spins up a GPU cluster in the provider and region you selected.

GPU Compass also tracks historical spot price trends over 30, 90, and 180-day windows, so you can see how volatile a given option really is, not just what it costs right now.
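One way to quantify "how volatile" is to summarize a daily spot-price series over trailing 30, 90, and 180-day windows. The sketch below uses the coefficient of variation (population standard deviation divided by the mean) as the volatility measure; that metric is our assumption for illustration, not necessarily what GPU Compass computes, and the example series is synthetic.

```python
import statistics


def window_stats(daily_prices, windows=(30, 90, 180)):
    """Summarize the trailing N days of a daily spot-price series for each N.

    daily_prices is ordered oldest to newest and assumed to be positive.
    A window longer than the series simply uses every available point.
    """
    if not daily_prices:
        raise ValueError("need at least one price")
    summary = {}
    for n in windows:
        recent = daily_prices[-n:]
        mean = statistics.fmean(recent)
        summary[n] = {
            "mean": mean,
            "min": min(recent),
            "max": max(recent),
            # Coefficient of variation: population std dev relative to the mean.
            "cv": statistics.pstdev(recent) / mean,
        }
    return summary


if __name__ == "__main__":
    # Synthetic series: 150 calm days, then 30 days swinging between two prices.
    prices = [30.0] * 150 + [20.0, 40.0] * 15
    for days, s in window_stats(prices).items():
        print(f"{days:>3}d  mean={s['mean']:.2f}  range=[{s['min']}, {s['max']}]  cv={s['cv']:.3f}")
```

The same mean can hide very different behavior: in the synthetic series every window averages 30.0, but the 30-day window is far more volatile than the 180-day one, which is exactly why looking at several windows helps.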

SkyPilot: System for All AI Compute

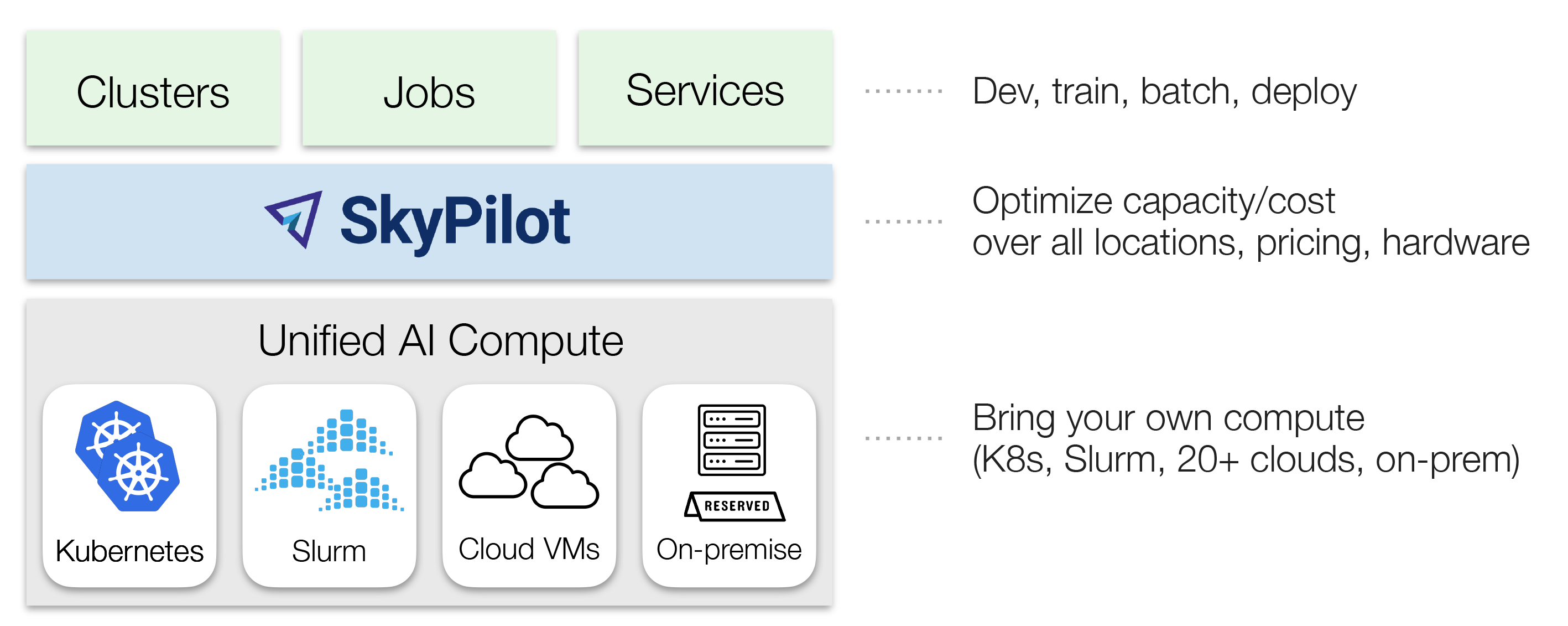

GPU Compass is built by the SkyPilot team. SkyPilot is an open-source framework for running, managing, and scaling AI workloads on any infrastructure: clouds, Kubernetes clusters, and Slurm. One YAML, any infra. Teams at Shopify, Meta FAIR, and others use SkyPilot to access GPUs and run AI workloads wherever they exist.

- If you have reserved Kubernetes clusters or Slurm: bring them all into SkyPilot and manage them in one place (see the SkyPilot docs).

- If you want to start with elastic VMs: set up your cloud credentials (see the SkyPilot docs), then click any offering on GPU Compass to get its launch command, or have your agent do it.

Why You Can Trust This Data

The underlying real-time data is maintained in the SkyPilot Catalog: an open-source repo that auto-fetches offerings from cloud provider APIs via GitHub Actions.

Here’s why we think ours is worth trusting.

- Vendor-neutral. SkyPilot doesn’t sell compute. We have no affiliate or reseller relationships with any provider listed on GPU Compass. The only reason this data exists is to power SkyPilot’s orchestrator.

- Open-source and auditable. The entire catalog lives on GitHub: the fetching logic, the raw data, and the auto-update commits, so you can verify the numbers yourself.

- Production-tested. The same catalog routes real training jobs, inference workloads, and batch processing across clouds every day. When a price is wrong, users running real workloads on real GPUs notice. That feedback loop keeps the data honest.
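If you want to audit what an auto-update actually changed, one approach is to diff two versions of a catalog CSV file, for example from two consecutive auto-update commits. The sketch below assumes a file with `InstanceType`, `Region`, and `Price` columns and keys rows by (instance type, region); those column names and the sample rows are hypothetical, so adjust them to the real file you are checking.

```python
import csv
import io


def load_prices(csv_text, key_cols=("InstanceType", "Region"), price_col="Price"):
    """Map (instance type, region) -> on-demand price for one catalog snapshot."""
    prices = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        key = tuple(row[c] for c in key_cols)
        prices[key] = float(row[price_col]) if row[price_col] else None
    return prices


def diff_snapshots(old_text, new_text):
    """Return (added, removed, changed) offerings between two snapshots."""
    old, new = load_prices(old_text), load_prices(new_text)
    added = sorted(new.keys() - old.keys())
    removed = sorted(old.keys() - new.keys())
    # Keys are unique, so sorting never needs to compare the price values.
    changed = sorted(
        (k, old[k], new[k]) for k in old.keys() & new.keys() if old[k] != new[k]
    )
    return added, removed, changed


# Hypothetical snapshots with placeholder names and prices.
OLD = """InstanceType,Region,Price
example-8xh100,region-1,80.00
example-8xh100,region-2,88.00
example-1xa10g,region-1,1.00
"""
NEW = """InstanceType,Region,Price
example-8xh100,region-1,76.00
example-8xh100,region-2,88.00
example-8xh200,region-1,100.00
"""

if __name__ == "__main__":
    added, removed, changed = diff_snapshots(OLD, NEW)
    print("added:", added)
    print("removed:", removed)
    for key, before, after in changed:
        print("changed:", key, before, "->", after)
```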

The SkyPilot Catalog also powers other dashboard projects, such as gpufinder.dev; give them a look.

Discovery is step one. We know price is not the whole picture. Performance, reliability, networking, and support all matter, especially at scale. Benchmarking data is on our roadmap. For now, GPU Compass gives you the broadest, most up-to-date view of GPU offerings and pricing anywhere.

Try GPU Compass Now

- GPU Compass: gpus.skypilot.co

- Orchestrate your AI workloads via SkyPilot: skypilot.co